Machine Learning in Materials Processing & Characterization

Unit 4: From Classical Metrics to Learned Representations

FAU Erlangen-Nürnberg

01. The Language of Microstructures

- How do we describe what we see in a microscope?

- Traditionally: Human-driven metrics (grain size, phase fraction)

- Modern approach: Algorithm-driven representations (embeddings)

- Goal: Capture the link between structure and properties — automatically

02. Learning Outcomes

By the end of this unit, you can:

- Quantify the information loss when condensing a micrograph into a stereological scalar

- Choose between hand-crafted descriptors and learned representations for a given materials task

- Encode a microstructure as input to a neural network (raw pixels, feature vectors, n-point statistics)

- Apply the MFML neural-network toolkit to a tabular materials-property prediction task

- Interpret what an MLP “learns” about a microstructure — and where this differs from human-readable descriptors

- Recognize the failure modes of MLPs on micrographs that motivate next week’s CNN material

Note

This morning’s MFML lecture covered the architecture, forward pass, and activation taxonomy of MLPs. We’ll recap that in 3 slides and then spend the rest of the lecture on what’s specific to materials data.

Part 1: Classical Microstructure Metrics

Slides 03–10

03. Stereology: Quantifying Structure

Stereology: The science of estimating 3D properties from 2D sections.

- Volume fractions (\(V_V\)): How much of each phase?

- Surface area per volume (\(S_V\)): Interface density

- Mean intercept length: Grain size estimation

How we’ve characterized structure for over a century — the backbone of materials standards (ASTM, DIN).
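As a rough illustration of how two of these quantities can be estimated from a segmented section, the sketch below computes an area fraction (an estimator of \(V_V\) for a random section) and a mean intercept length along horizontal test lines. The toy image, the function names, and the pixel size are assumptions made for this example; runs that touch the image border are counted as-is, which a careful measurement would handle separately.

```python
import numpy as np

def phase_fraction(binary):
    """Area fraction of the True phase; for a random section, an estimate of V_V."""
    return float(np.mean(binary))

def mean_intercept_length(binary, pixel_size=1.0):
    """Mean length of uninterrupted True-phase runs along horizontal test lines."""
    lengths = []
    for row in binary.astype(int):
        # Pad with zeros so every run has a start (+1) and an end (-1) in the diff.
        padded = np.concatenate(([0], row, [0]))
        diff = np.diff(padded)
        starts = np.where(diff == 1)[0]
        ends = np.where(diff == -1)[0]
        lengths.extend(ends - starts)
    return pixel_size * float(np.mean(lengths)) if lengths else 0.0

# Toy 4x8 "micrograph": True marks the phase of interest.
img = np.array([
    [1, 1, 0, 0, 1, 1, 1, 1],
    [0, 0, 0, 0, 1, 1, 0, 0],
    [1, 1, 1, 1, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0, 1, 1],
], dtype=bool)

vv = phase_fraction(img)                          # 16 of 32 pixels
mil = mean_intercept_length(img, pixel_size=0.5)  # 0.5 µm per pixel (assumed)
```

Both results are single scalars, which is exactly the kind of condensation the next slides discuss.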

04. Stereological Descriptors in Practice

Scalar features that condense complex 3D structures into single numbers:

- Grain size → one number

- Phase fraction → one number

- Porosity → one number

These are our “hand-crafted features.”

05. Hand-Crafted Shape Descriptors

Features based on human intuition:

- Shape: Aspect ratio, circularity, tortuosity

- Distribution: Nearest-neighbor distances, clustering index

- Texture: Orientation distribution function (ODF)

- Strength: Physically interpretable — you can explain them to a colleague

- Weakness: Biased — you only find what you look for. What if the key descriptor hasn’t been invented yet?

06. The Information Bottleneck

- A high-resolution micrograph: \(10^6\) pixels of information

- ASTM grain size: reduces this to one number

- Counting raw values, we discard more than 99.99% of them (1 number kept out of \(10^6\))

Question: Can we keep more information while remaining computationally efficient?

Answer: Yes — learned representations (embeddings) compress images into vectors that preserve the task-relevant information.

07. PSPP as a Feature Space

Processing → Structure (Metric \(d\)) → Property (Hardness \(H\))

Hall-Petch: \(H = H_0 + k \cdot d^{-1/2}\)

A linear model using a physical descriptor — works well for simple cases!
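A minimal sketch of what "linear model using a physical descriptor" means in practice: Hall-Petch is linear in the transformed feature \(d^{-1/2}\), so an ordinary least-squares line fit recovers \(H_0\) and \(k\). The grain sizes and the constants used to generate the data are invented for illustration and do not describe a real alloy.

```python
import numpy as np

# Hypothetical grain sizes (µm) and noise-free hardness values generated from
# assumed constants H0 = 100 HV and k = 300 HV·µm^0.5, for illustration only.
d = np.array([1.0, 4.0, 9.0, 16.0, 25.0, 100.0])
H = 100.0 + 300.0 * d ** -0.5

# Hall-Petch is linear in the transformed feature x = d^(-1/2).
x = d ** -0.5
k_fit, H0_fit = np.polyfit(x, H, deg=1)  # returns [slope, intercept]

H_pred_2um = H0_fit + k_fit * 2.0 ** -0.5  # prediction for a 2 µm grain size
```

With real, noisy measurements the fit would of course not recover the constants exactly; the point is only that the physics supplies the feature transform and the model stays linear.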

08. Why Not Just Metrics?

Modern materials defy simple descriptions:

- High-entropy alloys: 5+ principal elements, complex phase mixtures

- Nanocomposites: Multi-scale structures spanning nm to µm

- Additive manufacturing: Spatially varying microstructures, not statistically homogeneous

Simple metrics are insufficient to describe the “S” in PSPP completely. We need richer representations.

09. The Paradigm Shift

| Approach | Input | Features | Limitations |

|---|---|---|---|

| Classical | Micrograph → Metrics | Hand-crafted | Information loss |

| Modern | Micrograph → Network | Learned (embedding) | Need data |

From “Predicting with Descriptors” to “Learning Representations”

The model decides what features matter — not the scientist.

10. Part 1 Recap

- Classical metrics (stereology) are interpretable but lossy

- Hand-crafted features encode human knowledge — but may miss key descriptors

- The information bottleneck: \(10^6\) pixels → 1 number

- Modern materials need richer representations than scalar metrics

- The solution: let the model learn the representation

Part 2: MFML Recap — The NN Toolkit

Slides 11–14

11. What MFML covered this morning

- Neuron = affine map + non-linear activation: \(a = \sigma(\mathbf{w}^T\mathbf{x} + b)\)

- MLP = stack of such layers; learns its own features instead of relying on hand-crafted ones

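- Universal approximation: an MLP with one hidden layer can approximate any continuous function on a bounded input domain to arbitrary accuracy, given enough hidden neurons. The theorem guarantees that suitable weights exist, not that training on limited data will find them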

- Training by gradient descent on a loss function — the chain rule (“backprop”) gives gradients efficiently
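The first two bullets can be sketched in a few lines of NumPy: each layer applies an affine map followed by an activation, and the MLP is just a loop over layers. The layer widths, the random initialization, and the choice of ReLU with a linear output are assumptions for this example, not the configuration used in MFML.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def mlp_forward(x, layers):
    """Forward pass: ReLU on hidden layers, linear output (regression)."""
    a = x
    for i, (W, b) in enumerate(layers):
        z = W @ a + b                      # affine map
        a = z if i == len(layers) - 1 else relu(z)
    return a

rng = np.random.default_rng(0)
sizes = [12, 32, 16, 1]                    # assumed layer widths
layers = [(rng.normal(0, 0.1, (n_out, n_in)), np.zeros(n_out))
          for n_in, n_out in zip(sizes[:-1], sizes[1:])]

x = rng.normal(size=12)                    # one standardized feature vector
y_hat = mlp_forward(x, layers)
n_params = sum(W.size + b.size for W, b in layers)
```

For these assumed widths the network has 961 trainable parameters, which shows how quickly the count grows even for a small tabular input.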

Note

We will not re-derive these here. If anything is unclear, the MFML deck (Unit 4) is your reference.

12. Activation function cheat-sheet

| Layer | Task | Activation | Why |

|---|---|---|---|

| Hidden | General | ReLU / Leaky ReLU | Cheap, no saturation for \(z > 0\) |

| Output | Regression (e.g., hardness) | Linear | Predictions span \(\mathbb{R}\) |

| Output | Binary classification | Sigmoid | Output ∈ (0, 1) interpreted as probability |

| Output | Multi-class classification | Softmax | Outputs sum to 1 over \(C\) classes |

Materials example: phase classification — Softmax outputs \(P(\text{FCC}) = 0.72\), \(P(\text{BCC}) = 0.25\), \(P(\text{HCP}) = 0.03\).
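For reference, a small sketch of the softmax computation itself. The logits below are hypothetical: they are chosen as the logarithms of the probabilities in the example, so the function reproduces those numbers; a real classifier would produce its own logits.

```python
import math

def softmax(logits):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for the classes (FCC, BCC, HCP).
logits = [math.log(0.72), math.log(0.25), math.log(0.03)]
probs = softmax(logits)

# Adding a constant to every logit leaves the probabilities unchanged.
probs_shifted = softmax([z + 5.0 for z in logits])
```

The shift invariance is why subtracting the maximum logit is a safe way to avoid overflow.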

13. The training loop in one slide

The pieces — loss, gradient, update rule — are universal. What changes between materials problems is what goes into X, what y means, and how we encode the structure. That’s the rest of this lecture.
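The three pieces can be made concrete with a deliberately simple sketch. To keep the gradient explicit, a linear model stands in for the MLP here (an assumption of this example); for an MLP the two gradient lines would be replaced by backpropagation, while the loop structure stays the same. The data are synthetic.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))              # 200 samples, 3 standardized features
w_true, b_true = np.array([1.5, -2.0, 0.5]), 0.7
y = X @ w_true + b_true                    # synthetic, noise-free targets

w, b, lr = np.zeros(3), 0.0, 0.1
for step in range(500):
    pred = X @ w + b                       # forward pass
    err = pred - y
    loss = np.mean(err ** 2)               # loss: mean squared error
    grad_w = 2.0 * X.T @ err / len(y)      # gradient dL/dw
    grad_b = 2.0 * np.mean(err)            # gradient dL/db
    w -= lr * grad_w                       # update rule
    b -= lr * grad_b
```

Swapping in a materials problem changes only how `X` and `y` are built, not the loop.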

14. Why we use this toolkit on materials data

The MFML deck showed that an MLP can, in principle, approximate any continuous function. The materials questions are:

- What should we feed it? — micrograph pixels? hand-crafted descriptors? n-point statistics?

- How do we label it? — labels are scarce and expert-time-bounded

- How do we know it learned something physical? — interpretability matters in science

- When does the toolkit break on real micrographs? — motivates CNNs (next week)

Part 3: Encoding Microstructures for ML

Slides 15–24

15. The encoding question

Before any network can learn from a microstructure, we must turn it into a vector or tensor. The choice of encoding decides what the model can learn.

| Encoding | Input shape | What the model sees |

|---|---|---|

| Hand-crafted descriptors | \(\mathbb{R}^{D}\) (small) | Pre-distilled features |

| Raw flattened image | \(\mathbb{R}^{H \cdot W}\) (huge) | Every pixel, no spatial prior |

| Patch / windowed statistics | \(\mathbb{R}^{N \times D}\) | Local texture distributions |

| n-point statistics | \(\mathbb{R}^{D}\) | Spatial correlations, translation-invariant |

| Raw image with conv inductive bias | \(\mathbb{R}^{H \times W \times C}\) | Spatial features (next week) |

16. Hand-crafted descriptors revisited

The classical features from Part 1 — grain size, aspect ratio, phase fraction, ODF coefficients — are just one possible vector representation of the microstructure.

Their advantage: physically interpretable. You can name every component of the input vector.

Their disadvantage: information lossy and biased. Whatever physics you didn’t think to encode, the model cannot recover.

17. Two-point statistics

The two-point correlation function \(S_2(\mathbf{r})\):

\[S_2(\mathbf{r}) = P\bigl(\text{phase}(\mathbf{x}) = \alpha \;\wedge\; \text{phase}(\mathbf{x}+\mathbf{r}) = \alpha\bigr)\]

- Probability that two points separated by \(\mathbf{r}\) are both in phase \(\alpha\)

- Captures characteristic length scales, anisotropy, ordering

Why this is a good NN input: it’s translation-invariant by construction, low-dimensional (a few hundred numbers), and physically meaningful.
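One common way to compute \(S_2\) for a segmented image is via the FFT. The sketch below assumes periodic boundary conditions and a synthetic random two-phase image; the function name is made up for this example. It also checks two properties mentioned above: \(S_2(\mathbf{0})\) equals the volume fraction, and a (periodic) translation of the image leaves \(S_2\) unchanged.

```python
import numpy as np

def two_point_autocorrelation(indicator):
    """S2(r) for one phase of a periodic 2D microstructure, via FFT."""
    m = indicator.astype(float)
    F = np.fft.fft2(m)
    # Wiener-Khinchin: the autocorrelation is the inverse FFT of the power spectrum.
    corr = np.fft.ifft2(F * np.conj(F)).real
    return corr / m.size                   # normalize counts to probabilities

rng = np.random.default_rng(0)
phase = rng.random((64, 64)) < 0.3         # synthetic indicator of phase alpha
S2 = two_point_autocorrelation(phase)

volume_fraction = phase.mean()
# Shift the image periodically and recompute: S2 should not change.
S2_shifted = two_point_autocorrelation(np.roll(phase, (5, 11), axis=(0, 1)))
```

For non-periodic micrographs, padding or masking is needed before the FFT; that step is omitted here. To reach "a few hundred numbers", one would typically truncate \(S_2\) to short separations or average it radially.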

18. n-point statistics and microstructure functions

- \(S_2\) is the simplest of a family of \(n\)-point correlation functions

- Higher-order \(S_n\) capture more morphological detail at the cost of dimensionality

- Frameworks like MKS (Materials Knowledge Systems) use two-point statistics as the input to a learned structure-property model

A practical compromise: feed the network \(S_2\) (informative, compact) rather than raw pixels (noisy, huge).

19. Compositional and process descriptors

For many materials problems, the input is not an image at all — it’s tabular:

- Composition: 5–20 element fractions (e.g., for a steel: C, Mn, Si, Cr, Ni, Mo, …)

- Process parameters: cooling rate, anneal temperature, hold time, atmosphere

- Heat-treatment history: ordered list of process steps

This is the natural setting for an MLP. The input vector is small (\(D \sim 10\)–\(50\)), interpretable, and has well-defined physical units. Standardize each feature (\(\mu = 0\), \(\sigma = 1\)) before feeding the network.

20. Tabular vs structural inputs

| Input type | Typical \(D\) | Best architecture | Example task |

|---|---|---|---|

| Composition + process (tabular) | 10–50 | MLP (this lecture) | Predict hardness from alloy + treatment |

| Hand-crafted morphological descriptors | 5–50 | MLP | Predict fatigue life from grain stats |

| n-point statistics | 100–1000 | MLP / 1D conv | MKS-style property prediction |

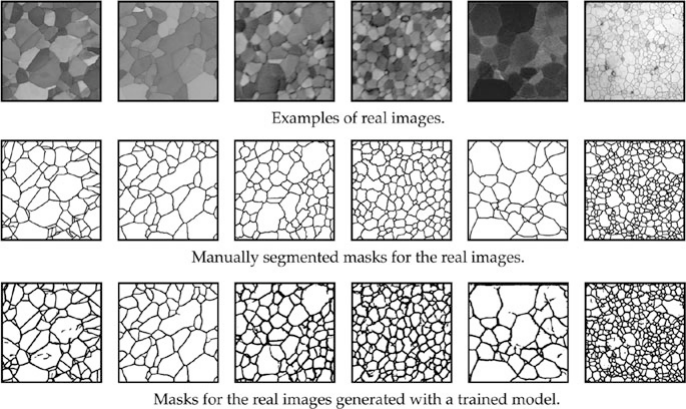

| Raw 2D micrograph | \(10^4\)–\(10^7\) | CNN (next week) | Phase segmentation, defect detection |

21. The information bottleneck, revisited

- Classical pipeline: micrograph → 1 scalar (e.g., ASTM grain size) → linear regression → property

- MLP on hand-crafted features: micrograph → 5–10 descriptors → MLP → property

- MLP on \(S_2\): micrograph → ~\(10^2\) correlation values → MLP → property

- End-to-end CNN (next week): micrograph → CNN → property, no hand-crafted features

Each step retains more of the \(\sim 10^6\) pixels of original information. More information ≠ better model unless you have data to support it — this trade-off drives the rest of the course.

22. Standardization is non-negotiable

A composition feature in [0, 1] (mass fraction) and a temperature in [300, 1500] K cannot be fed to the same MLP unscaled.

- The loss landscape becomes severely ill-conditioned (MFML treats Hessian conditioning in next week’s lecture)

- Gradient descent oscillates in the steep direction and crawls in the flat one

- Always: \(\tilde{x}_i = (x_i - \mu_i) / \sigma_i\), where \(\mu, \sigma\) are computed on the training set only and reused at test time
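A minimal sketch of the rule in the last bullet, with invented feature values (a carbon mass fraction and an anneal temperature). The key detail is that `mu` and `sigma` are computed once, from the training rows, and then reused unchanged for the test row.

```python
import numpy as np

# Hypothetical features: column 0 = carbon mass fraction, column 1 = anneal temperature (K).
X_train = np.array([[0.002, 900.0], [0.004, 1100.0], [0.006, 1300.0]])
X_test = np.array([[0.005, 1000.0]])

mu = X_train.mean(axis=0)                  # statistics from the training set only
sigma = X_train.std(axis=0)

X_train_std = (X_train - mu) / sigma
X_test_std = (X_test - mu) / sigma         # reuse training mu and sigma at test time
```

After scaling, both columns have comparable magnitude, even though the raw values differed by about five orders of magnitude.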

23. Splitting materials data — the specimen rule

- Don’t split crops or pixels randomly — multiple crops from one specimen are not independent

- Do split by specimen / sample / processing batch

- Training set, validation set, and test set must come from disjoint physical samples

This is the single most common mistake in published materials-ML papers. We will revisit it formally in Unit 6 (transfer learning) and in MFML’s generalization week.
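One possible way to implement the specimen rule is sketched below using only the standard library: whole specimens are shuffled and assigned to a set, and every crop inherits the assignment of its specimen. The function name, the 70/15/15 fractions, and the specimen IDs are assumptions of this example.

```python
import random

def split_by_specimen(specimen_ids, fractions=(0.7, 0.15, 0.15), seed=0):
    """Assign whole specimens to train/val/test, then return sample indices per set."""
    specimens = sorted(set(specimen_ids))
    random.Random(seed).shuffle(specimens)
    n_train = round(fractions[0] * len(specimens))
    n_val = round(fractions[1] * len(specimens))
    assignment = {}
    for i, s in enumerate(specimens):
        assignment[s] = "train" if i < n_train else "val" if i < n_train + n_val else "test"
    split = {"train": [], "val": [], "test": []}
    for idx, s in enumerate(specimen_ids):
        split[assignment[s]].append(idx)   # every crop follows its specimen
    return split

# 20 hypothetical specimens with 4 crops each.
specimen_ids = [f"S{n:02d}" for n in range(20) for _ in range(4)]
split = split_by_specimen(specimen_ids)
specimens_in = {k: {specimen_ids[i] for i in v} for k, v in split.items()}
```

Libraries such as scikit-learn offer group-aware splitters that serve the same purpose; the hand-written version is shown only to make the logic visible.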

24. Part 3 Recap

- The choice of input encoding determines what physics the model can capture

- Hand-crafted descriptors are interpretable but biased

- n-point statistics are a principled middle ground for microstructures

- For composition + process data, tabular MLP input is the natural choice

- Always standardize features and split by specimen — both have direct consequences for gradient flow and generalization

Part 4: Case Study — ASTM Grain Size vs. MLP

Slides 25–34

25. The task

Predict tensile strength of a polycrystalline alloy from its microstructure.

- 200 specimens, each with one calibrated SEM micrograph and a measured tensile strength

- Goal: build a model that generalizes to new specimens, not new crops of seen specimens

26. Three competing models

| Model | Input | Parameters |

|---|---|---|

| Linear (Hall-Petch) | Mean grain size \(d^{-1/2}\) | 2 |

| Linear + descriptors | All 12 hand-crafted descriptors (including \(d^{-1/2}\), aspect ratio, porosity, pore-size dispersion) | 13 |

| MLP | All 12 hand-crafted descriptors | \(\sim 1{,}000\) |

We use the same 12 descriptors for the linear-extended model and the MLP — so the only difference is how the inputs are combined.

27. Results

| Method | Features | R² (test) |

|---|---|---|

| Hall-Petch | Mean grain size only | 0.72 |

| Linear + descriptors | 12 hand-crafted | 0.81 |

| MLP | 12 hand-crafted | 0.88 |

The MLP improves test R² by 0.07 (0.81 to 0.88) on the same input as the linear model; the gain comes purely from non-linear feature combinations.

28. What did the MLP discover?

Permutation importance reveals which inputs the MLP actually relies on:

- Largest grain size (not the mean) is the dominant feature — consistent with weakest-link fracture mechanics

- Pore-size dispersion matters more than total porosity — clusters of pores are more harmful than uniformly distributed ones

- Mean grain size appears mid-importance — it captures bulk Hall-Petch behavior but is not the single best predictor

Physical reading: the network rediscovered weakest-link statistics on its own. Hall-Petch was an average; failure is a tail-of-distribution phenomenon.
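For readers who have not met permutation importance before, here is a minimal sketch of the idea: shuffle one feature column, re-evaluate the model, and record how much R² drops. The `predict` function is a hand-written stand-in for a trained model and the data are synthetic; neither comes from the case study. In practice the importance should be evaluated on held-out specimens.

```python
import numpy as np

def r2_score(y, y_hat):
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return 1.0 - ss_res / ss_tot

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean drop in R² when one feature column is shuffled across samples."""
    rng = np.random.default_rng(seed)
    baseline = r2_score(y, predict(X))
    drops = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        for _ in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X[:, j])   # break the link between feature j and y
            drops[j] += baseline - r2_score(y, predict(X_perm))
    return drops / n_repeats

# Stand-in for a trained model: depends strongly on column 0, weakly on 1, not on 2.
predict = lambda X: 3.0 * X[:, 0] + 0.5 * X[:, 1]

rng = np.random.default_rng(1)
X = rng.normal(size=(300, 3))
y = predict(X)
importance = permutation_importance(predict, X, y)
```

A feature the model ignores gets zero importance, and strongly used features get large drops. Correlated features can share or mask importance, so rankings like the one above should be read with that caveat.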

29. Where the linear model fails

A linear model in \(\bar{d}^{-1/2}\) sees only the mean grain size: it predicts the same strength for any two microstructures with equal \(\bar{d}\), whether or not one of them contains a few very large grains. Physically these are different states, because thermomechanical processing affects the tail of the grain-size distribution differently from the mean.

The MLP captures this because it can compose features non-linearly: e.g., \(\sigma_y \approx f(\bar{d}, d_{\max}, \mathrm{Var}[d])\) with \(f\) free to be non-additive.

30. Discussion: when to prefer the linear model

- Tiny dataset (\(N < 50\)): the MLP overfits; linear regularizes by structure

- Need for extrapolation to compositions/processing outside the training range: a linear model extrapolates along a known trend, whereas an MLP can behave arbitrarily outside the data it was trained on

- Regulatory or safety contexts: linear coefficients can be audited and reported; MLP weights cannot

Materials practice: try the linear baseline first. Only adopt the MLP if it improves cross-validation R² and you can defend its predictions on out-of-distribution test cases.

31. Failure mode: train/test split done wrong

A common but invalid protocol:

- Take 200 micrographs, cut each into 16 crops → 3,200 crops

- Shuffle all 3,200 crops, hold out 20% as test

- Report 0.95 R² on the test set

- The model has seen another crop of the same specimen during training

- The reported R² overstates real-world performance by a wide margin

- A specimen-level split (Part 3, slide 23) on the same data drops R² from 0.95 to 0.72
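The leak in this protocol can be checked directly. The sketch below replays the crop-level shuffle with the numbers from the list (200 specimens, 16 crops each, 20% held out) and counts how many test crops come from a specimen that also appears in the training set. Only the bookkeeping is simulated; no model or image data is involved, and the random seed is arbitrary.

```python
import random

n_specimens, crops_per_specimen = 200, 16
crops = [(s, c) for s in range(n_specimens) for c in range(crops_per_specimen)]

rng = random.Random(0)
rng.shuffle(crops)                          # the invalid protocol: shuffle crops, not specimens
n_test = len(crops) // 5                    # hold out 20 %
test, train = crops[:n_test], crops[n_test:]

train_specimens = {s for s, _ in train}
leaked = sum(1 for s, _ in test if s in train_specimens)
leak_fraction = leaked / len(test)
```

With 16 crops per specimen, it is extremely unlikely that all crops of any specimen land in the test set, so essentially every test crop has siblings in training.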

32. Failure mode: distribution shift across labs

- Train on micrographs from microscope A: R² = 0.88

- Test on the same alloy imaged with microscope B: R² collapses to 0.45

- Why? The MLP latched onto contrast and brightness statistics that differ between detectors

Mitigation: feature engineering that normalizes out instrument-specific statistics, or — more powerfully — domain-randomized training, which we’ll revisit in Unit 6 (transfer learning).
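As one simple example of normalizing out instrument-specific statistics, the sketch below standardizes each image to zero mean and unit variance. The detector difference is modelled as a pure gain and offset change, which is an assumption of this example; real detectors also differ in noise, resolution, and non-linear contrast, none of which this step removes.

```python
import numpy as np

def normalize_image(img, eps=1e-8):
    """Zero-mean, unit-variance intensities per image."""
    img = img.astype(float)
    return (img - img.mean()) / (img.std() + eps)

rng = np.random.default_rng(0)
scope_a = rng.random((32, 32))              # synthetic micrograph from "microscope A"
scope_b = 1.8 * scope_a + 0.2               # same scene, different gain and offset (assumed model)

same_after_norm = np.allclose(normalize_image(scope_a), normalize_image(scope_b), atol=1e-6)
```

Descriptors computed after this step no longer depend on overall brightness or contrast, which is the minimum one would want before comparing images across instruments.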

33. Failure mode: scaling to high-resolution micrographs

What if we don’t have hand-crafted descriptors, only the raw image?

- A \(1024 \times 1024\) micrograph flattened into an MLP has \(\sim 10^6\) inputs; with a first hidden layer of about 1,000 neurons, that layer alone needs \(\sim 10^9\) weights

- 200 specimens are nowhere near enough to constrain that many weights

- The MLP memorizes pixel patterns rather than learning physics

This is the failure mode that motivates next week’s lecture. CNNs use weight sharing and locality to make the parameter count manageable, allowing us to learn directly from pixels.
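The parameter-count argument is easy to verify with a few lines of arithmetic. The hidden width of 1,000 and the convolutional layer with 32 filters of size \(3 \times 3\) are assumed values chosen for illustration.

```python
def dense_params(n_in, n_out):
    return n_in * n_out + n_out             # weights + biases

def conv2d_params(kernel, c_in, c_out):
    return kernel * kernel * c_in * c_out + c_out

n_pixels = 1024 * 1024                      # flattened grayscale micrograph
hidden = 1000                               # assumed first-layer width

mlp_first_layer = dense_params(n_pixels, hidden)
conv_first_layer = conv2d_params(kernel=3, c_in=1, c_out=32)  # assumed 3x3 kernels, 32 filters
```

The dense layer needs about \(10^9\) parameters, while the convolutional layer needs a few hundred, independent of image size, because the same small kernels are reused at every position.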

34. Part 4 Recap

- On the same descriptors, an MLP beats linear regression because it captures non-linear interactions

- The MLP rediscovered weakest-link statistics — physical insight emerged from learning

- Linear baselines remain valuable for small data, extrapolation, and auditability

- The two showstopping pitfalls, crop-level splitting that leaks specimens into the test set and cross-lab distribution shift, apply to any MLP on materials data

- MLPs hit a hard wall on raw images — the motivation for CNNs next week

35. Unit 4 Summary & Next Steps

Key Takeaways:

- Classical metrics are interpretable but lossy — pick them when the physics is well-understood

- Hand-crafted descriptors + MLP is a pragmatic upgrade and the right starting point for tabular materials data

- n-point statistics are the principled bridge between morphology and learned representations

- Specimen-level splits are non-negotiable for honest evaluation, and feature standardization for stable training

- MLPs fail on raw images — that failure is the motivation for CNNs (Unit 5)

Reading:

- Sandfeld (2024): Ch. 17 (Neural Networks) (Sandfeld et al. 2024)

- McClarren (2021): Ch. 5 (Feed-Forward NNs) (McClarren 2021)

- Neuer (2024): Ch. 4.5 (already read for MFML this morning) (Neuer et al. 2024)

Next Week: Unit 5 — Convolutional Neural Networks for Microstructure Analysis

References

© Philipp Pelz - Machine Learning in Materials Processing & Characterization