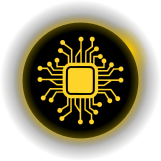

```mermaid
flowchart LR
    A["Raw spectra<br>(N × D)"] --> B["Background /<br>baseline subtraction"]
    B --> C["Energy / 2θ<br>calibration & alignment"]
    C --> D["Normalization"]
    D --> E["(optional)<br>reduction / decomposition"]
    E --> F["Downstream:<br>quant · phase ID · anomaly"]
    style A fill:#2d5016,stroke:#4a8c2a,color:#fff
    style D fill:#1a3a5c,stroke:#2a6a9c,color:#fff
    style F fill:#5c1a1a,stroke:#9c2a2a,color:#fff
```

Machine Learning in Materials Processing & Characterization

Unit 9: ML for Characterization Signals

FAU Erlangen-Nürnberg

§1 · Signals & their physics

01. Unit 9 — The Signal Application & Domain Unit

This unit is not a methods unit. The methods were derived elsewhere; here we ask what the signal physics demands of them.

| Method | Owned by (derived in) |
|---|---|
| PCA / SVD | MFML u02 · ML-PC u02 |
| Clustering, autoencoders | MFML u05 · ML-PC u05 |
| NMF | MFML u02 · ML-PC u05 |
| t-SNE / UMAP, latent spaces | MFML u09 |
| MAE / SSL (DINOv2, I-JEPA) | MFML u09 · ML-PC u09b |

Important

We reference these methods. We do not re-derive them. Unit 9 is about background subtraction, calibration transfer, quantification, rotational ambiguity, operando streaming — the things the signal physics forces on you.

Learning outcomes. After 90 minutes you can:

- Explain how the probe physics dictates the structure of XRD / EELS / EDX / XPS / Raman signals.

- Build a defensible spectral preprocessing pipeline (background → calibrate/align → normalize).

- Apply MCR-ALS and reason about rotational ambiguity.

- Turn a latent/peak into a quantified concentration with an error bar (Cliff-Lorimer / ζ-factor).

- Deploy SSL/MAE-pretrained spectral encoders and operando novelty detection.

02. Beyond Images: 1-D Signals

- Most of the course so far: spatial data (micrographs, microstructure maps).

- But many instruments produce 1-D spectral signals, intensity vs. energy / angle / wavenumber:
  - XRD: crystal structure via Bragg peaks
  - EELS: bonding and electronic structure (edges, fine structure)
  - EDX/EDS: elemental composition (characteristic X-ray lines)
  - XPS: surface chemistry and oxidation states (chemical shifts)
  - Raman: molecular / phonon fingerprints

- Modern instruments collect millions of spectra per experiment — every pixel of a STEM scan is a spectrum (spectrum imaging).

Note

A spectrum with \(N\) channels is a vector \(\mathbf{x} \in \mathbb{R}^N\). Every linear-algebra / ML tool applies directly — but each “dimension” is a physical energy channel, and that is what makes this unit different from generic vector ML.

03. The Nature of Characterization Signals

- High dimensionality — 1024–4096 channels; each spectrum a point in \(\mathbb{R}^{N}\).

- Sparse peaks — most channels are background; the science lives in a handful of channels.

- Continuous backgrounds — bremsstrahlung (EDX), plasmon tails (EELS), fluorescence (Raman).

- Noise — Poisson (shot) noise dominates at low dose; Gaussian detector/readout noise adds on top.

- Variability — peak positions shift with composition; peak shapes change with bonding/oxidation.

- Low intrinsic dimensionality — a sample with \(P\) phases spans \(\approx P\) + a few directions, \(\ll N\).

Important

The noise is Poisson: variance equals the mean, so \(N\) counts carry \(\pm\sqrt{N}\) noise. A peak with 100 counts has \(\pm 10\) noise; a background of 10 000 counts has \(\pm 100\), so a 100-count peak sitting on that background is only about \(1\sigma\) above it. This is why dose, normalization, and background all couple: you cannot treat them independently.
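A quick Monte Carlo check of that arithmetic, as a minimal sketch: it assumes a single channel whose counts are Poisson with mean peak + background, and a background level that is known exactly (the best case, with no extra noise from estimating it). `net_peak_stats` is a hypothetical helper name.

```python
import numpy as np


def net_peak_stats(peak_mean, bg_mean, n_trials=200_000, seed=0):
    """Mean and std of background-subtracted counts in one channel.

    Counts are Poisson with mean peak_mean + bg_mean; the background level is
    assumed known exactly, so this is the best case.
    """
    rng = np.random.default_rng(seed)
    gross = rng.poisson(peak_mean + bg_mean, size=n_trials)
    net = gross - bg_mean
    return net.mean(), net.std()


m0, s0 = net_peak_stats(100, 0)          # isolated peak: std ~ sqrt(100) = 10
m1, s1 = net_peak_stats(100, 10_000)     # same peak on background: std ~ sqrt(10 100)
print(f"isolated peak:      SNR = {m0 / s0:.1f}")
print(f"peak on background: SNR = {m1 / s1:.1f}")
```

The peak itself is identical in both runs; only the background it sits on changes, and with it the signal-to-noise drops by an order of magnitude.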

04. Signal Formation: The Physics of the Probe

The signal’s structure is dictated by the physics of the probe — every method in §2–§4 must respect it.

- EELS — ionization edges (sawtooth/hydrogenic) on a steep plasmon / power-law background (\(\sim AE^{-r}\)); near-edge fine structure (ELNES) encodes valence.

- EDX — characteristic lines (Gaussian-ish, detector-broadened) on a bremsstrahlung continuum; absorption + fluorescence distort intensities.

- XRD — Bragg peaks at \(2\theta\) from \(d\)-spacings; instrumental + size/strain broadening (Caglioti, Scherrer); preferred orientation reweights intensities.

- XPS — core-level lines whose binding-energy shift (chemical shift, ~0.1–3 eV) encodes oxidation state; inelastic background (Shirley/Tougaard).

- Raman — sharp phonon lines on a broad, sample-dependent fluorescence background that can dwarf the signal.
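Two of the XRD relations above in code, as a minimal sketch: Bragg's law \(n\lambda = 2d\sin\theta\) places a reflection, and the Scherrer equation \(\tau = K\lambda/(\beta\cos\theta)\) turns its sample broadening into a crystallite size. The Cu Kα₁ wavelength, the shape factor \(K = 0.9\), the Si (111) spacing and the 0.5° FWHM are assumed inputs, and \(\beta\) must already have the instrumental (Caglioti) contribution removed.

```python
import numpy as np

CU_KALPHA1_A = 1.5406  # Cu K-alpha1 wavelength in angstrom (assumed lab source)


def two_theta_deg(d_spacing_a, wavelength_a=CU_KALPHA1_A, order=1):
    """Bragg's law n*lambda = 2 d sin(theta), returned as 2-theta in degrees."""
    sin_theta = order * wavelength_a / (2.0 * d_spacing_a)
    if not 0.0 < sin_theta <= 1.0:
        raise ValueError("reflection not accessible at this wavelength")
    return 2.0 * np.degrees(np.arcsin(sin_theta))


def scherrer_size_nm(fwhm_deg, two_theta, wavelength_a=CU_KALPHA1_A, k=0.9):
    """Scherrer estimate tau = K lambda / (beta cos theta).

    beta is the sample FWHM in radians, instrumental broadening already removed.
    """
    beta = np.radians(fwhm_deg)
    theta = np.radians(two_theta / 2.0)
    return k * wavelength_a / (beta * np.cos(theta)) / 10.0   # angstrom -> nm


tt = two_theta_deg(3.1356)          # Si (111) d-spacing in angstrom
size = scherrer_size_nm(0.5, tt)    # hypothetical 0.5 deg sample broadening
print(f"Si(111): 2theta = {tt:.2f} deg, 0.5 deg FWHM -> tau = {size:.1f} nm")
```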

Note

Different physics → different background model, different noise, different invariances. There is no universal preprocessing. The pipeline must be chosen per modality.

[FIGURE: five mini-panels, one per modality, each showing the characteristic peak/edge shape sitting on its characteristic background, annotated with the physical origin of each component]

05. Why ML — and Where Manual Fitting Breaks

- Manual peak fitting is slow, subjective, non-reproducible, and does not scale.

- 10 peaks in 1 spectrum: a Friday afternoon. 10 peaks in \(10^6\) spectra: impossible.

- Overlapping peaks make decomposition ambiguous.
  - Mn-L₂,₃ (~640 eV) and Fe-L₂,₃ (~708 eV) edges (EELS) lie close enough that the Fe edge sits on the Mn post-edge tail; Ti-Kα and Ba-Lα (EDX) overlap near ~4.5 keV.

- Subtle spectral changes encode the science.
  - The Fe-L₂,₃ white-line ratio distinguishes Fe²⁺ from Fe³⁺; a 0.3 eV onset shift = an oxidation-state change.

- Batch effects — calibration drift, detector aging, beam damage, contamination — break naïve models.

Important

Pointer. The methods that solve these — PCA/SVD (MFML u02, ML-PC u02), autoencoders / VAE / conv-AE (MFML u05, ML-PC u05), t-SNE/UMAP & latent spaces & MAE/DINOv2/I-JEPA (MFML u09) — were all derived there. From here we apply them to what the signal physics demands.

§2 · The spectral preprocessing pipeline

06. The Pipeline — Garbage In Dominates Everything

- Every box is a physics-informed choice, not a default. Order matters (slide 04).

- The reduction/decomposition box holds the referenced methods (PCA/AE/NMF): it is one box, not the unit.

Important

The dominant error term in any spectral-ML result is almost never the model — it is the preprocessing. A 2% baseline error swamps the difference between PCA and a transformer. §2 is the unit’s real content because almost none of it exists in the methods units.

07. Baseline / Background Subtraction

Algorithmic (model-free)

- SNIP: Statistics-sensitive Nonlinear Iterative Peak-clipping (Ryan et al. 1988): iteratively clip each channel to the minimum of itself and the mean of the two channels at \(\pm m\), growing \(m\) up to about the peak width; peaks are clipped away and what remains is the smooth continuum estimate.

- Asymmetric Least Squares (AsLS) (Eilers and Boelens 2005): penalized smoother with asymmetric weights — points above the baseline are down-weighted. Two knobs: smoothness \(\lambda\), asymmetry \(p\).
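Both algorithmic baselines fit in a few lines of NumPy/SciPy. This is a minimal sketch, not a reference implementation: the SNIP variant steps the half-window \(m\) upward and works on the log-log-sqrt (LLS) transform often used for count data, and the AsLS version follows the Eilers and Boelens formulation with a second-difference penalty. The test spectrum and the parameter values (`max_half_window=30`, \(\lambda = 10^5\), \(p = 0.01\)) are illustrative assumptions.

```python
import numpy as np
from scipy import sparse
from scipy.sparse.linalg import spsolve


def snip_baseline(y, max_half_window=30):
    """SNIP-style baseline estimate for non-negative count data.

    For m = 1..max_half_window, each channel is replaced by
    min(itself, mean of the two channels at distance m). The clipping runs on
    the log-log-sqrt (LLS) transform and is inverted at the end.
    """
    y = np.asarray(y, dtype=float)
    v = np.log(np.log(np.sqrt(y + 1.0) + 1.0) + 1.0)
    for m in range(1, max_half_window + 1):
        neighbour_mean = 0.5 * (v[:-2 * m] + v[2 * m:])
        v[m:-m] = np.minimum(v[m:-m], neighbour_mean)
    return (np.exp(np.exp(v) - 1.0) - 1.0) ** 2 - 1.0


def asls_baseline(y, lam=1e5, p=0.01, n_iter=10):
    """Asymmetric least squares baseline.

    Minimises sum_i w_i (y_i - z_i)^2 + lam * sum_i (second difference of z)_i^2,
    with w_i = p where the data lie above the current baseline, 1 - p below.
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    D = sparse.diags([1.0, -2.0, 1.0], [0, -1, -2], shape=(n, n - 2))
    H = lam * (D @ D.T)                      # roughness penalty matrix
    w = np.ones(n)
    for _ in range(n_iter):
        W = sparse.spdiags(w, 0, n, n)
        z = spsolve((W + H).tocsc(), w * y)
        w = np.where(y > z, p, 1.0 - p)      # peaks (above z) get almost no weight
    return z


# Noise-free test spectrum: convex continuum plus one peak (hypothetical values)
ch = np.arange(1024, dtype=float)
background = 200.0 * np.exp(-ch / 400.0) + 20.0
y = background + 500.0 * np.exp(-0.5 * ((ch - 500.0) / 5.0) ** 2)

for name, base in [("SNIP", snip_baseline(y)), ("AsLS", asls_baseline(y))]:
    net = y - base
    print(f"{name}: net peak {net[500]:.0f} (true 500), "
          f"max off-peak error {np.abs(net[100:400]).max():.2f}")
```

The SNIP half-window must exceed the peak half-width, and the AsLS \(\lambda\) must be stiff enough not to follow the peak; these two knobs are exactly the physics-informed choices the pipeline slide warns about.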

Physical (model-based)

- EELS: power-law \(A E^{-r}\) fitted in a pre-edge window; or plasmon/Drude model.

- EDX: bremsstrahlung continuum (Kramers + detector response + absorption).

- XPS: Shirley / Tougaard inelastic background.
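The EELS model-based route, as a minimal sketch: fit \(A E^{-r}\) by linear regression of \(\log I\) on \(\log E\) inside a pre-edge window and extrapolate under the edge. The synthetic edge at 532 eV, the window and the exponent are hypothetical; real fits also need Poisson-aware weighting and a window placed safely below the edge.

```python
import numpy as np


def fit_power_law(energy_ev, counts, window):
    """Fit I(E) = A * E**(-r) by regressing log I on log E in a pre-edge window."""
    lo, hi = window
    sel = (energy_ev >= lo) & (energy_ev < hi) & (counts > 0)
    slope, intercept = np.polyfit(np.log(energy_ev[sel]), np.log(counts[sel]), 1)
    return np.exp(intercept), -slope


def subtract_power_law(energy_ev, counts, window):
    """Extrapolate the fitted power law over the whole range and subtract it."""
    A, r = fit_power_law(energy_ev, counts, window)
    return counts - A * energy_ev ** (-r), (A, r)


E = np.arange(400.0, 700.0, 0.5)
background = 1e12 * E ** -3.5
edge = np.where(E >= 532.0, 50.0 * (532.0 / E) ** 2, 0.0)   # hypothetical edge at 532 eV
counts = background + edge
net, (A, r) = subtract_power_law(E, counts, window=(470.0, 525.0))
print(f"fitted r = {r:.3f}; max residual below the edge = {np.abs(net[E < 532]).max():.1e}")
```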

Important

A wrong background biases every downstream feature — peak areas, ratios, latent coordinates. It is a systematic, not random, error: averaging more spectra does not remove it.

[FIGURE: a Raman spectrum with a strong fluorescence background; overlay of SNIP vs AsLS vs a too-stiff polynomial fit, with the resulting baseline-subtracted spectra below]

08. Energy / 2θ Calibration & Spectral Alignment

- The problem. The physical axis is not stable: EELS energy offset drifts (zero-loss wander), XRD \(2\theta\) has zero/sample-height errors, Raman wavenumber depends on laser/grating. A 0.3 eV / 0.05° shift destroys cross-spectrum ML.

Peak-referenced calibration. Anchor to known features:

- EELS: zero-loss peak (0 eV), a known edge onset.

- XRD: a silicon / LaB₆ standard, known reflections.

- Raman: a Si line at 520.7 cm⁻¹.

Warping for non-rigid misalignment.

- DTW — dynamic time warping: optimal monotone alignment of two spectra.

- COW — correlation-optimized warping (Nielsen et al. 1998): piecewise stretch maximizing segment correlation; the chemometrics workhorse.
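Peak-referenced calibration and rigid alignment, as a minimal sketch. The calibration is a least-squares gain/offset through anchor features; the alignment estimates an integer channel shift by maximising the cross-correlation with a reference spectrum. The channel positions are hypothetical, and only a rigid shift is handled: stretching or non-uniform drift needs DTW or COW.

```python
import numpy as np


def linear_axis_calibration(peak_channels, reference_positions):
    """Least-squares gain/offset so that position = gain * channel + offset."""
    gain, offset = np.polyfit(peak_channels, reference_positions, 1)
    return gain, offset


def xcorr_shift(reference, spectrum, max_shift=50):
    """Integer shift s such that spectrum ~ reference moved right by s channels."""
    ref = reference - reference.mean()
    y = spectrum - spectrum.mean()
    shifts = np.arange(-max_shift, max_shift + 1)
    scores = [np.dot(ref, np.roll(y, -s)) for s in shifts]
    return int(shifts[int(np.argmax(scores))])


# Hypothetical anchors: zero-loss peak at channel 50 (0 eV), known edge at channel 582 (532 eV)
gain, offset = linear_axis_calibration([50.0, 582.0], [0.0, 532.0])

ch = np.arange(1024)
ref = np.exp(-0.5 * ((ch - 400) / 6.0) ** 2) + 0.5 * np.exp(-0.5 * ((ch - 600) / 4.0) ** 2)
drifted = np.roll(ref, 7)                 # same spectrum, 7 channels of drift
s = xcorr_shift(ref, drifted)
aligned = np.roll(drifted, -s)            # np.roll wraps around; crop edges in practice
print(f"gain {gain:.3f} eV/ch, offset {offset:.1f} eV, detected shift {s} channels")
```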

Important

Misalignment is the silent killer of cross-instrument ML: PCA “discovers” a component that is just the shift; a classifier learns the instrument, not the chemistry. Always align before reduction.

09. Normalization Strategies — and What Each Assumes

| Strategy | Formula | Physical assumption |
|---|---|---|
| Total count / area | \(\mathbf{x}' = \mathbf{x}/\sum_j x_j\) | All variation in total intensity is dose/thickness, not chemistry |
| Max-peak height | \(\mathbf{x}' = \mathbf{x}/\max_j x_j\) | A reference peak is composition-invariant |
| SNV | \(\mathbf{x}' = (\mathbf{x}-\bar x)/s_x\) | Per-spectrum mean & std are nuisance scatter/offset (chemometrics) |
| Reference-peak ratio | \(\mathbf{x}' = \mathbf{x}/x_{\text{ref}}\) | An internal-standard line is constant (e.g. matrix element) |

- Normalization is not cosmetic: without it PCA/AE learn dose and thickness, not chemistry (variance is dominated by the largest, least interesting effect).

Important

Every normalization changes the noise model. Total-count normalization correlates channels and breaks the clean Poisson assumption — do it after any variance-stabilizing / Poisson-aware step, not before.
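The four normalizations plus one variance-stabilizing option, as a minimal sketch. The check is the invariance each one buys: all four return the same result when a spectrum is scaled by a dose/thickness factor, which is precisely the "intensity variation is nuisance" assumption in the table. The Anscombe transform \(2\sqrt{x + 3/8}\) is one common Poisson-aware step, assumed here as the example; the reference-line window is hypothetical.

```python
import numpy as np


def total_count(x):
    return x / x.sum()


def max_peak(x):
    return x / x.max()


def snv(x):
    """Standard normal variate: remove per-spectrum offset and scale."""
    return (x - x.mean()) / x.std()


def reference_peak(x, ref_window):
    """Divide by the integrated intensity of an internal-standard line."""
    return x / x[ref_window].sum()


def anscombe(counts):
    """Anscombe transform: Poisson counts -> approximately unit variance."""
    return 2.0 * np.sqrt(np.asarray(counts, dtype=float) + 3.0 / 8.0)


ch = np.arange(512)
x = (5.0 + 100.0 * np.exp(-0.5 * ((ch - 150) / 4.0) ** 2)
     + 40.0 * np.exp(-0.5 * ((ch - 300) / 4.0) ** 2))
ref_window = slice(290, 311)              # hypothetical internal-standard line
thick = 3.0 * x                           # same chemistry, 3x dose/thickness

for f in (total_count, max_peak, snv):
    print(f.__name__, "dose-invariant:", np.allclose(f(thick), f(x)))
print("reference_peak dose-invariant:",
      np.allclose(reference_peak(thick, ref_window), reference_peak(x, ref_window)))

rng = np.random.default_rng(0)
counts = rng.poisson(50, size=100_000)
print(f"raw variance {counts.var():.1f}, after Anscombe {anscombe(counts).var():.3f}")
```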

10. Peak Deconvolution & Physically-Constrained Fitting

- Real peaks have physical line shapes: Gaussian (instrumental/Doppler) ⊗ Lorentzian (lifetime) = Voigt, usually approximated by the pseudo-Voigt (a weighted sum of the two); EELS edges have hydrogenic/ELNES shape.

- Overlap separation = constrained non-linear least squares: shared widths, fixed multiplet ratios, non-negative amplitudes, positions from physics.

- Differentiable forward models \(f_\theta(\text{physics}) \to\) spectrum: fit by gradient descent, drop into autograd → the bridge to physics-informed fitting and to slide 27 (inverse problems).
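Constrained overlap fitting with `scipy.optimize.least_squares`, as a minimal sketch: two pseudo-Voigt peaks share one width and shape, their splitting and area ratio are fixed, and bounds keep the area non-negative and the Lorentzian fraction in \([0, 1]\). The 2:1 area ratio (the statistical ratio of an XPS p doublet) and the 13 eV splitting are illustrative assumptions; which quantities to fix is a physics decision, and for an EELS \(L_3/L_2\) valence analysis the ratio is the measurement and would stay free.

```python
import numpy as np
from scipy.optimize import least_squares

GAUSS_K = 4.0 * np.log(2.0)


def pseudo_voigt(x, x0, fwhm, eta):
    """Unit-area pseudo-Voigt: eta * Lorentzian + (1 - eta) * Gaussian, same FWHM."""
    gauss = np.sqrt(GAUSS_K / np.pi) / fwhm * np.exp(-GAUSS_K * ((x - x0) / fwhm) ** 2)
    hw = fwhm / 2.0
    lorentz = (hw / np.pi) / ((x - x0) ** 2 + hw ** 2)
    return eta * lorentz + (1.0 - eta) * gauss


def doublet(params, x, splitting, area_ratio):
    """Two peaks sharing width and shape; the second sits `splitting` above the
    first and carries area / area_ratio (both fixed, not fitted)."""
    area, x0, fwhm, eta = params
    return area * (pseudo_voigt(x, x0, fwhm, eta)
                   + pseudo_voigt(x, x0 + splitting, fwhm, eta) / area_ratio)


def fit_doublet(x, y, p0, splitting, area_ratio):
    lower = [0.0, x.min(), 1e-3, 0.0]        # area >= 0, width > 0, 0 <= eta <= 1
    upper = [np.inf, x.max(), np.inf, 1.0]
    result = least_squares(lambda p: doublet(p, x, splitting, area_ratio) - y,
                           p0, bounds=(lower, upper))
    return result.x


x = np.linspace(700.0, 740.0, 801)
true_params = [100.0, 708.0, 2.0, 0.3]
y = doublet(true_params, x, splitting=13.0, area_ratio=2.0)
fitted = fit_doublet(x, y, p0=[80.0, 707.5, 2.5, 0.5], splitting=13.0, area_ratio=2.0)
print("area, position, FWHM, eta:", np.round(fitted, 4))
```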

Note

Applied callout — DAE-assisted EELS. At low dose the Fe-L₂,₃ fine structure separating Fe²⁺/Fe³⁺ is buried in Poisson noise. A denoising autoencoder trained on simulated Fe-L edges (the method is ML-PC u05 §D) is used here purely as a preprocessing denoiser feeding the constrained fit — not as the analysis. Result: clean white-line ratios at ~10× lower dose. The AE is a tool inside box 1–4, not the deliverable.

[FIGURE: two overlapping EELS L₃/L₂ white lines, raw noisy data, the constrained Voigt-multiplet fit with shared width and fixed branching ratio, and residuals]

11. Calibration Transfer Between Instruments

- Industrial reality. A model trained on instrument A fails on B: different detector response, resolution, geometry — even after axis calibration. Re-labelling per instrument is unaffordable.

Piecewise Direct Standardization (PDS) (Wang et al. 1991)

- Measure a few transfer standards on both A and B.

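- Learn a banded linear map \(\mathbf{X}_A \approx \mathbf{X}_B \mathbf{F}\): each A-channel is regressed on a local window of B-channels, so \(\mathbf{F}\) is banded.

- Apply \(\mathbf{F}\) to new B spectra, \(\mathbf{x}_A \approx \mathbf{x}_B \mathbf{F}\), to bring them into A’s space → reuse A’s model.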
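A minimal PDS sketch in NumPy. It follows the usual formulation, in which each A-channel is regressed (with an intercept) on a small window of B-channels, so the fitted banded matrix maps new B spectra directly into A's space with no matrix inversion. The toy instrument difference (B reads 10% high and one channel shifted), the 12 standards and `half_window=2` are hypothetical; practical PDS often uses PCR or PLS inside each window for stability.

```python
import numpy as np


def pds_fit(XA, XB, half_window=2):
    """Piecewise direct standardization from transfer standards measured on both
    instruments: A-channel j is regressed on B-channels j-half_window..j+half_window."""
    n_std, n_ch = XA.shape
    F = np.zeros((n_ch, n_ch))
    bias = np.zeros(n_ch)
    for j in range(n_ch):
        lo, hi = max(0, j - half_window), min(n_ch, j + half_window + 1)
        Z = np.hstack([XB[:, lo:hi], np.ones((n_std, 1))])   # window + intercept
        coef, *_ = np.linalg.lstsq(Z, XA[:, j], rcond=None)
        F[lo:hi, j] = coef[:-1]
        bias[j] = coef[-1]
    return F, bias


def pds_apply(xB, F, bias):
    """Map spectra measured on B into A's channel space: x_A ~ x_B F + bias."""
    return xB @ F + bias


# Toy transfer (hypothetical): B reads 10% higher and one channel to the right of A
rng = np.random.default_rng(0)
n_ch = 50
XA_std = rng.random((12, n_ch))                 # 12 transfer standards on A
XB_std = 1.1 * np.roll(XA_std, 1, axis=1)       # the same standards on B
F, bias = pds_fit(XA_std, XB_std)

xA_new = rng.random(n_ch)
xB_new = 1.1 * np.roll(xA_new, 1)
xA_rec = pds_apply(xB_new, F, bias)
# The last channel wrapped around in this toy, so it lies outside its window
print("max error (interior channels):", np.abs(xA_rec[:-1] - xA_new[:-1]).max())
```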

Simpler & more general

- Slope/bias correction — affine fix when only gain+offset differ.

- Domain adaptation — align feature distributions A↔︎B (the DA idea is MFML u09; reference, do not re-derive).

Important

PDS needs only a handful of standards measured on both instruments — orders of magnitude cheaper than re-labelling. The difference between a model that ships to one lab and one that ships to a fleet.

12. Spatial-Spectral Models for Spectrum Images

- A spectrum image is a cube \((x, y, E)\) — STEM-EDS easily \(256{\times}256{\times}2048\), STEM-EELS \(100{\times}100{\times}1024\).

- Naïve: unfold to \(N_\text{pix}\times D\), treat each pixel independently. Throws away that neighbouring pixels are almost the same spectrum.

- Better: exploit spatial correlation — factored 2-D spatial + 1-D spectral convolutions, or a 3-D conv-AE. (The conv-AE architecture is MFML u05 / ML-PC u05 — referenced, not re-derived.)

- Benefit: implicit spatial averaging denoises for free; spatial context separates interface pixels from bulk; learns core-shell / gradient structure.
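The simplest form of "borrowing strength" from neighbours is a spatial mean filter that leaves the energy axis untouched. This sketch uses `scipy.ndimage.uniform_filter` with a 3×3×1 window, an assumption standing in for a learned spatial-spectral model, and shows both sides of the trade-off on a toy two-phase cube: roughly \(\sqrt{9} = 3\times\) less Poisson noise in the bulk, and invented intermediate spectra in the two columns next to the interface.

```python
import numpy as np
from scipy.ndimage import uniform_filter


def spatial_mean(cube, size=3):
    """Average each spectrum with its size x size spatial neighbours (axes 0, 1);
    the energy axis (last) is not smoothed."""
    return uniform_filter(cube.astype(float), size=(size, size, 1), mode="reflect")


rng = np.random.default_rng(0)
means = np.full((64, 64, 32), 10.0)          # (y, x, E), hypothetical mean counts
means[:, 32:, :] = 50.0                      # sharp vertical interface between two phases
cube = rng.poisson(means).astype(float)
smoothed = spatial_mean(cube)

noise_before = cube[5:25, 5:25].std()
noise_after = smoothed[5:25, 5:25].std()
cols_after = smoothed[:, 30:34].mean(axis=(0, 2))
print(f"noise reduction in the bulk: {noise_before / noise_after:.2f}x")
print("column means across the interface, before:", cube[:, 30:34].mean(axis=(0, 2)).round(1))
print("column means across the interface, after: ", cols_after.round(1))
```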

Important

Trade-off. Spatial smoothing blurs sharp interfaces and can invent mixed spectra at boundaries — choose the receptive field to match the smallest real feature, not the noise.

[FIGURE: (x,y,E) datacube schematic; one slice as a noisy single-pixel spectrum vs the same pixel reconstructed by a spatial-spectral model that borrowed strength from its neighbourhood]

§3 · Decomposition & quantification

13. Reference Recap — Spectral Decomposition (one slide)

Important

Derived elsewhere — results only here. PCA/SVD: MFML u02, ML-PC u02. NMF: MFML u02, ML-PC u05 s28. Autoencoders: MFML u05, ML-PC u05. We do not re-derive any of them.

| Decomposition | Factorization | Spectral interpretation |
|---|---|---|
| PCA / SVD | \(\mathbf{X}\approx \bar{\mathbf{x}} + \mathbf{C}\mathbf{V}^\top\), \(\mathbf{V}\) orthonormal | Eigenspectra = orthogonal variation directions (can be negative; not physical phases) |
| NMF | \(\mathbf{X}\approx \mathbf{W}\mathbf{H}\), \(\mathbf{W},\mathbf{H}\ge 0\) | \(\mathbf{H}\) = end-member spectra (≈ pure phases), \(\mathbf{W}\) = abundance maps |
| AE / conv-AE | \(\mathbf{x}\!\to\!\mathbf{z}\!\to\!\hat{\mathbf{x}}\) | Non-linear latent; handles peak shift, not just mixing |

- The only thing this unit adds: the spectral reading — non-negativity is physical because photon counts and concentrations cannot be negative; orthogonality is a math convenience with no physical mandate.
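A small check of that reading with scikit-learn, as a sketch on hypothetical data: two overlapping, strictly positive end-members mixed with non-negative abundances. Two overlapping non-negative spectra cannot be spanned by an orthogonal non-negative pair, so at least one PCA eigenspectrum must have negative lobes, while NMF components are non-negative by construction (which, per the next slide, still does not make them the true end-members).

```python
import numpy as np
from sklearn.decomposition import NMF, PCA

ch = np.arange(512)


def gauss(mu, sigma):
    return np.exp(-0.5 * ((ch - mu) / sigma) ** 2)


# Two overlapping, strictly positive end-member spectra (hypothetical)
E = np.vstack([gauss(200, 30) + 0.1, gauss(260, 30) + 0.1])
rng = np.random.default_rng(0)
abundances = rng.uniform(0.0, 1.0, size=(300, 2))
X = abundances @ E                              # linear, non-negative, noise-free

pca = PCA(n_components=2).fit(X)
nmf = NMF(n_components=2, init="nndsvda", max_iter=1000, random_state=0).fit(X)


def has_both_signs(v, tol=0.05):
    """True if v has positive and negative lobes above tol * max|v|."""
    scale = np.abs(v).max()
    return bool(v.max() > tol * scale and v.min() < -tol * scale)


print("PCA eigenspectra with both signs:", [has_both_signs(v) for v in pca.components_])
print("NMF end-members non-negative:", bool((nmf.components_ >= 0).all()))
```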

14. MCR-ALS & Rotational Ambiguity

MCR-ALS (Multivariate Curve Resolution by Alternating Least Squares; de Juan et al. 2014): solve \(\mathbf{X}=\mathbf{C}\mathbf{S}^\top+\mathbf{E}\) by alternating non-negative least squares for concentrations \(\mathbf{C}\) and spectra \(\mathbf{S}\).

Rotational ambiguity. For any invertible \(\mathbf{T}\): \[\mathbf{X} = (\mathbf{C}\mathbf{T})(\mathbf{T}^{-1}\mathbf{S}^\top)\] fits equally well. Non-negativity alone leaves a feasible band of solutions, not a unique answer.

Constraints collapse the band:

- Closure — abundances sum to 1 (mass balance).

- Unimodality — a concentration profile has one maximum.

- Known spectra — fix a reference end-member.

- Selectivity / local rank — zones where only one component exists.
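A minimal MCR-ALS sketch with `scipy.optimize.nnls` (non-negativity) and a closure step, run on a hypothetical line scan in which no pixel is pure and the initial guesses are the two most extreme measured spectra. It reaches an essentially perfect fit while its "pure" spectra are still 10:90 mixtures: non-negativity plus closure did not collapse the feasible band in this case. A selectivity zone or a known reference spectrum is what would.

```python
import numpy as np
from scipy.optimize import nnls


def mcr_als(X, S0, n_iter=50, closure=True):
    """Minimal MCR-ALS for X ~ C S^T with non-negativity and optional closure."""
    S = np.array(S0, dtype=float)                       # channels x components
    for _ in range(n_iter):
        C = np.array([nnls(S, row)[0] for row in X])    # pixels x components, C >= 0
        if closure:                                     # abundances sum to 1
            C /= np.maximum(C.sum(axis=1, keepdims=True), 1e-12)
        S = np.array([nnls(C, col)[0] for col in X.T])  # channels x components, S >= 0
    lack_of_fit = np.linalg.norm(X - C @ S.T) / np.linalg.norm(X)
    return C, S, lack_of_fit


ch = np.arange(400)
e_a = np.exp(-0.5 * ((ch - 100) / 15) ** 2)
e_b = np.exp(-0.5 * ((ch - 300) / 15) ** 2)
alpha = np.linspace(0.1, 0.9, 50)                       # no pixel is pure
X = np.outer(alpha, e_a) + np.outer(1 - alpha, e_b)

# Initial guesses: the two most extreme measured spectra (both still mixtures)
C, S, lof = mcr_als(X, X[[0, -1]].T)


def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


best_match = [max(cosine(s, e_a), cosine(s, e_b)) for s in S.T]
print(f"lack of fit: {lof:.1e}")
print("best cosine of each resolved spectrum to a true end-member:",
      [round(c, 4) for c in best_match])
```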

Important

Genuinely not in the methods units. NMF is taught there; MCR-ALS + rotational ambiguity + how physical constraints resolve it is the new content.

[FIGURE: feasible band of resolved spectra under non-negativity only (a fan of curves) collapsing to a single curve as closure + unimodality + a known end-member are added]

15. Quantification — From Spectra to Concentrations

- A latent coordinate or a peak area is not a number a metallurgist trusts. Quantification produces at.% with a physical basis.

Cliff-Lorimer (EDX, thin film) (Cliff and Lorimer 1975) \[\frac{C_A}{C_B} = k_{AB}\,\frac{I_A}{I_B}\] Ratio of background-subtracted line intensities × a known \(k\)-factor. Needs the thin-film approximation (no absorption).

ζ-factor method (Watanabe and Williams 2006) \[C_A = \zeta_A \frac{I_A}{\rho t}\cdot(\dots)\] Absolute quantification from first principles; folds in mass-thickness, handles absorption self-consistently — the modern standard.
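Both quantification routes in their simplest form, as a sketch. Cliff-Lorimer is closed for a binary with \(C_A + C_B = 1\); the ζ-factor version uses the thin-film limit \(C_j = \zeta_j I_j / \sum_i \zeta_i I_i\) and \(\rho t = \sum_i \zeta_i I_i / D_e\) (\(D_e\): total electron dose), with the absorption correction omitted. All intensities, \(k\)- and ζ-values are hypothetical, in consistent units.

```python
import numpy as np


def cliff_lorimer_binary(i_a, i_b, k_ab):
    """Thin-film binary A-B: C_A / C_B = k_AB * I_A / I_B, closed by C_A + C_B = 1."""
    kr = k_ab * i_a / i_b
    c_a = kr / (1.0 + kr)
    return c_a, 1.0 - c_a


def zeta_thin_film(intensities, zetas, dose_electrons):
    """Zeta-factor method in the thin-film limit, absorption correction omitted:
    C_j = zeta_j I_j / sum(zeta I) and rho*t = sum(zeta I) / D_e."""
    zi = np.asarray(zetas, dtype=float) * np.asarray(intensities, dtype=float)
    return zi / zi.sum(), zi.sum() / dose_electrons


c_a, c_b = cliff_lorimer_binary(12_000, 8_000, k_ab=1.2)     # hypothetical net counts
conc, mass_thickness = zeta_thin_film([12_000, 8_000], [2.0, 3.0], dose_electrons=1e9)
print(f"Cliff-Lorimer: C_A = {c_a:.3f}, C_B = {c_b:.3f}")
print(f"zeta: C = {np.round(conc, 3)}, rho*t = {mass_thickness:.2e} (units set by zeta)")
```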

Important

ML can predict \(I_A\) robustly (denoising, deconvolution); the \(k\)/ζ-factor step is physics. Skipping it and regressing concentration end-to-end discards the physical audit trail a lab/certifier requires.

16. Uncertainty on the Quantification

- A concentration without an error bar is not a deliverable (recall the ML-PC u11 thesis: a threshold decision needs a distribution, not a number).

- Error budget for \(C_A\): counting statistics on \(I_A\) (Poisson) · background-model error (systematic, slide 07) · \(k\)/ζ-factor uncertainty · absorption/thickness uncertainty · ML denoiser bias.

- Propagation. Analytic error propagation through the Cliff-Lorimer / ζ equation for the physical terms; the UQ machinery for the ML-predicted parts is ML-PC u11 (GP CIs, deep ensembles, MC-dropout) — referenced, not re-derived.
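First-order propagation through the binary Cliff-Lorimer result, as a minimal sketch under the assumptions listed in the docstring: Poisson variance on gross and background counts, and an optional systematic background error added in quadrature. With the hypothetical counts below, a 5% background error makes the error bar several times larger than the Poisson-only one.

```python
import numpy as np


def cliff_lorimer_sigma(net_a, bg_a, net_b, bg_b, k_ab, sigma_k=0.0, bg_rel_err=0.0):
    """C_A and its 1-sigma for a thin-film binary via first-order propagation.

    Assumptions (for illustration):
      * gross counts in each peak window are Poisson, var = net + bg;
      * the background estimate itself carries Poisson var ~ bg;
      * a systematic background error bg_rel_err * bg is added in quadrature
        for this single measurement; unlike the Poisson terms it does not
        shrink when more spectra are summed.
    """
    var_a = (net_a + bg_a) + bg_a + (bg_rel_err * bg_a) ** 2
    var_b = (net_b + bg_b) + bg_b + (bg_rel_err * bg_b) ** 2
    rel_var = var_a / net_a**2 + var_b / net_b**2 + (sigma_k / k_ab) ** 2
    kr = k_ab * net_a / net_b
    c_a = kr / (1.0 + kr)
    sigma_c = kr * np.sqrt(rel_var) / (1.0 + kr) ** 2    # dC_A/d(kr) = 1/(1+kr)^2
    return c_a, sigma_c


c, s_pois = cliff_lorimer_sigma(10_000, 20_000, 8_000, 20_000, k_ab=1.2)
_, s_sys = cliff_lorimer_sigma(10_000, 20_000, 8_000, 20_000, k_ab=1.2, bg_rel_err=0.05)
print(f"C_A = {c:.3f} +/- {s_pois:.4f} (Poisson only)")
print(f"C_A = {c:.3f} +/- {s_sys:.4f} (with 5% background systematic)")
```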

Important

The often-dominant term is not counting statistics — it is the background-model systematic (slide 07). Reporting only \(\sqrt{N}\) Poisson error bars is the most common honest-looking lie in the field.

17. Non-Linear Unmixing — When Linear Mixing Fails

- The comfortable model: a boundary pixel is \(\mathbf{x} = \alpha\,\mathbf{e}_A + (1-\alpha)\,\mathbf{e}_B + \boldsymbol{\eta}\) — linear mixing; estimate \(\alpha\) from a latent coordinate. Often fine.

- When linear mixing breaks:
  - EELS multiple scattering: in a thick specimen, losses convolve rather than add.
  - EDX absorption / fluorescence: emitted X-rays are re-absorbed depth-dependently.
  - XRD channelling / extinction, preferred orientation: intensities are not additive in phase fraction.

- What to do: physical forward model (deconvolve plural scattering; absorption correction) → then unmix; or a non-linear decoder (AE — method in ML-PC u05) whose non-linearity absorbs the curvature. Validate against a known mixture.
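A toy version of the "validate against a known mixture" step, as a sketch: the least-squares \(\hat\alpha\) for \(\mathbf{x} = \alpha\,\mathbf{e}_A + (1-\alpha)\,\mathbf{e}_B\) recovers a known \(\alpha\) exactly when mixing is linear, and is biased when the same end-members combine log-linearly (the form Beer-Lambert attenuation through stacked layers takes). The spectra and the log-linear model are illustrative assumptions, not a model of any specific instrument.

```python
import numpy as np


def linear_alpha(x, e_a, e_b):
    """Least-squares alpha for x ~ alpha e_A + (1 - alpha) e_B (projection on e_A - e_B)."""
    d = e_a - e_b
    return float((x - e_b) @ d / (d @ d))


ch = np.arange(256)
e_a = 0.1 + np.exp(-0.5 * ((ch - 100) / 10) ** 2)
e_b = 0.1 + np.exp(-0.5 * ((ch - 150) / 10) ** 2)
alpha_true = 0.25                                  # the "known mixture"

x_linear = alpha_true * e_a + (1 - alpha_true) * e_b
# Toy non-additive mixing (assumption): log-linear, as for Beer-Lambert
# attenuation through stacked layers, so the end-member signals multiply.
x_loglinear = np.exp(alpha_true * np.log(e_a) + (1 - alpha_true) * np.log(e_b))

alpha_lin = linear_alpha(x_linear, e_a, e_b)
alpha_log = linear_alpha(x_loglinear, e_a, e_b)
print(f"linear mixture:     alpha_hat = {alpha_lin:.3f} (true {alpha_true})")
print(f"log-linear mixture: alpha_hat = {alpha_log:.3f} (true {alpha_true})")
```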

Important

A non-linear method fitting a non-linear mixture does not prove it recovered the physics. Always check against a sample of known fractional composition.

§4 · Representation learning for spectra, applied

18. MAE Pretraining on Unlabelled Spectra

The masking trick. Masked Autoencoder (He et al. 2022) on 1-D spectra:

- Split each spectrum into ~64 patches.

- Mask 75%; encode only the visible 25% with a small transformer.

- Lightweight decoder + mask tokens predicts the masked bins.

- Loss = MSE on masked bins only → forced to model spectral structure, not copy.
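The data side of MAE for 1-D spectra, as a minimal NumPy sketch: patchify, random 75% masking, and an MSE that only counts masked patches. The encoder and decoder are deliberately omitted (the architecture lives in the methods units), and the helper names are hypothetical.

```python
import numpy as np


def patchify(spectrum, n_patches=64):
    """Split a 1-D spectrum into n_patches contiguous patches (length must divide)."""
    return spectrum.reshape(n_patches, -1)


def random_mask(n_patches, mask_ratio=0.75, rng=None):
    """Boolean mask, True = hidden from the encoder (MAE-style random masking)."""
    rng = np.random.default_rng() if rng is None else rng
    n_masked = int(round(mask_ratio * n_patches))
    mask = np.zeros(n_patches, dtype=bool)
    mask[rng.permutation(n_patches)[:n_masked]] = True
    return mask


def masked_mse(pred_patches, target_patches, mask):
    """MSE over masked patches only; visible patches contribute nothing."""
    return float(np.mean((pred_patches[mask] - target_patches[mask]) ** 2))


rng = np.random.default_rng(0)
spectrum = rng.random(1024)
patches = patchify(spectrum)                    # (64, 16)
mask = random_mask(len(patches), 0.75, rng)
visible = patches[~mask]                        # the only input the encoder sees

# A prediction that is perfect on masked patches but wrong on visible ones
# still scores zero: the loss only asks whether the hidden part was inferred.
pred = patches.copy()
pred[~mask] += 10.0
print(visible.shape, masked_mse(pred, patches, mask))
```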

Pretrain on the lab’s entire unlabelled archive (EELS+Raman+XRD pooled); fine-tune a tiny head on the ~100 labelled spectra.

Why this is the natural materials move. \(10^4\)–\(10^5\) unlabelled spectra rot on the file server; \(10^2\) labelled (labelling = the expensive expert step). MAE converts the unlabelled bulk into a feature extractor.

Note

Rule of thumb. You need \(\gtrsim 10\times\) more unlabelled than labelled spectra before MAE pays off; 200 k pretraining + 100 fine-tuning spectra typically beats a from-scratch CNN by 5–15 percentage points in the small-data regime.

Method derived in MFML u09 (SSL family). Here: the spectra-specific deployment.

19. Self-Supervised & Foundation Models for Spectra

- MAE (slide 18) is reconstruction-based SSL. Two other families transfer to spectra:

- DINOv2-style (Oquab et al. 2024) — self-distillation / invariance to augmentations (shift, broaden, rescale, add Poisson noise).

- I-JEPA-style — predict latent representations of masked regions, not bins: less low-level, more semantic.

- Spectral foundation models: one encoder pretrained on a huge cross-modality unlabelled corpus, then probed/fine-tuned per task (phase ID, valence, quant features).
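Candidate augmentations for invariance-based SSL on spectra, as a minimal sketch. Each corresponds to a nuisance the probe physics actually produces (residual calibration shift, resolution change, dose, shot noise); the ranges in `random_view` are hypothetical and would have to be set from the instrument, since an augmentation larger than the real nuisance teaches the encoder to ignore chemistry.

```python
import numpy as np
from scipy.ndimage import gaussian_filter1d


def aug_shift(x, delta):
    """Sub-channel shift (positive delta moves features to higher channels).
    Valid only within the residual calibration uncertainty."""
    ch = np.arange(x.size, dtype=float)
    return np.interp(ch - delta, ch, x)


def aug_broaden(x, sigma):
    """Extra Gaussian broadening (resolution or size/strain change); preserves area."""
    return gaussian_filter1d(x, sigma, mode="nearest")


def aug_rescale(x, factor):
    """Global intensity change (dose / thickness)."""
    return x * factor


def aug_poisson(x, rng):
    """Re-draw counts from a Poisson law with the spectrum as the mean."""
    return rng.poisson(np.clip(x, 0.0, None)).astype(float)


def random_view(x, rng, max_shift=2.0, max_sigma=1.5, dose_range=(0.3, 1.0)):
    """One random augmented view for invariance-based SSL (DINOv2-style).
    The ranges are hypothetical and must come from the instrument physics."""
    x = aug_shift(x, rng.uniform(-max_shift, max_shift))
    x = aug_broaden(x, rng.uniform(0.1, max_sigma))
    x = aug_rescale(x, rng.uniform(*dose_range))
    return aug_poisson(x, rng)


ch = np.arange(512, dtype=float)
x = 200.0 * np.exp(-0.5 * ((ch - 200.0) / 4.0) ** 2) + 20.0
rng = np.random.default_rng(0)
view_1, view_2 = random_view(x, rng), random_view(x, rng)   # a positive pair for SSL
```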

Important

Method = MFML u09 (SSL) + ML-PC u09b (transformer backbone). Reference, do not re-derive. Today’s content: which augmentations are physically valid for spectra, and the evidence that pretrained encoders beat from-scratch on small materials sets.

20. Why Unsupervised Compression Can Destroy the Science

Think About This — the PCA rare-phase trap

Question. PCA denoising lets you cut acquisition dose 10×. Why not always do it?

Hint. Think about a feature present in only one or two pixels of a million-pixel map.

Consider.

- Eigenspectra are computed from the whole dataset — they encode the common.

- A rare phase contributes negligible variance → it falls outside the top-\(K\) subspace.

- Truncated reconstruction projects the rare phase away: denoising literally erases the discovery.

- The same logic indicts MAE/SSL latents, NMF with too-few components, aggressive spatial smoothing (slide 12).

Important

Denoising vs. discovery is a fundamental tension, not a tuning issue. Always inspect the residual \(\mathbf{x}-\hat{\mathbf{x}}\) spatially — a rare phase hides there, not in the reconstruction.
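The trap is easy to reproduce, as a minimal NumPy sketch on hypothetical data: three common phases mixed with random fractions, plus a rare phase in two pixels out of 5000. With closure the common phases span a rank-2 centred subspace, so top-2 PCA (via SVD) reconstructs them well and almost completely removes the rare peak; the per-pixel residual norm is what exposes it.

```python
import numpy as np

rng = np.random.default_rng(0)
n_pix, n_ch = 5000, 200
ch = np.arange(n_ch)


def gauss(mu, sigma):
    return np.exp(-0.5 * ((ch - mu) / sigma) ** 2)


# Three common phases mixed with random fractions, plus small Gaussian noise
phases = np.vstack([gauss(50, 5), gauss(90, 5), gauss(120, 5)])
fractions = rng.dirichlet([1.0, 1.0, 1.0], size=n_pix)
X = fractions @ phases + rng.normal(0.0, 0.01, size=(n_pix, n_ch))
rare_pixels = [10, 20]                       # a rare phase in two pixels only
X[rare_pixels] += gauss(170, 3)

# PCA by SVD of the mean-centred data; keep the top-K components
K = 2
mean = X.mean(axis=0)
U, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
X_hat = mean + (X - mean) @ Vt[:K].T @ Vt[:K]

residual = np.linalg.norm(X - X_hat, axis=1)
print("rare-phase peak, data vs reconstruction:",
      round(X[10, 170], 2), round(X_hat[10, 170], 2))
print("pixels with the largest residual:", np.argsort(residual)[-2:])
```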

21. What the Latent Learns — and Seeding It From Simulation

The latent can rediscover the physics.

- Train a 2-D-latent AE on EDX of a ternary Fe-Cr-Ni alloy; colour latent points by known composition.

- \(c_\text{Fe}+c_\text{Cr}+c_\text{Ni}=1\) → only 2 free composition variables → the latent forms a triangle: the network re-derived the Gibbs ternary with no thermodynamics input.

- Emergent, not programmed — but only if preprocessing (§2) made composition the dominant variance.

Seed it from simulation when labels are scarce.

- Pretrain on simulated spectra, fine-tune on few real ones:
  - XRD: Rietveld / structure-factor simulation
  - EELS: FEFF / DFT
  - EDX: Monte-Carlo (CASINO, DTSA-II)

- Add realistic Poisson + readout noise + drift to the sim.

Important

The sim must capture the right physics (peak shapes, backgrounds, artifacts) or you transfer a simulation accent the real instrument never speaks.

§5 · Applications & operando

22. Case: Automatic XRD Phase Identification

Problem. Given a noisy multi-phase XRD pattern, identify the crystallographic phases. Database (ICDD) peak-matching is manual, slow, and brittle for mixtures, preferred orientation, and broadening.

Pipeline (methods referenced, not re-derived).

- §2 preprocessing: background (SNIP), \(2\theta\) calibration (LaB₆ standard), normalization.

- Encode with an AE/classifier pretrained on simulated patterns for all candidate phases (slide 21).

- Nearest-neighbour / clustering in latent space → phase set; high reconstruction error → amorphous / unknown (slide 20 residual logic).

Why it beats database matching. Robust to peak broadening (size/strain) and preferred orientation that defeat rigid peak-position matching; decomposes mixtures in latent space.
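A deliberately simple baseline for the mixture step, as a sketch: non-negative least squares (`scipy.optimize.nnls`) of the measured pattern against simulated reference patterns, with the relative residual as the "unknown/amorphous" flag. This is a swapped-in linear technique, not the encoder/latent pipeline above, and the library peaks are hypothetical; it is also exactly the rigid peak-position matching that broadening and preferred orientation defeat.

```python
import numpy as np
from scipy.optimize import nnls

two_theta = np.arange(10.0, 80.0, 0.02)


def pattern(peaks, width=0.1):
    """Simulated reference pattern: Gaussian peaks at (2theta, relative intensity)."""
    return sum(h * np.exp(-0.5 * ((two_theta - p) / width) ** 2) for p, h in peaks)


# Hypothetical reference library (stand-in for patterns simulated from database entries)
library = np.vstack([
    pattern([(28.4, 1.0), (47.3, 0.6), (56.1, 0.35)]),
    pattern([(31.7, 1.0), (45.5, 0.7), (66.2, 0.3)]),
    pattern([(25.3, 1.0), (37.8, 0.2), (48.0, 0.35)]),
]).T                                            # channels x phases


def identify(y, library):
    """Non-negative weight per reference phase and the relative residual;
    a large residual flags something the library cannot explain."""
    weights, rnorm = nnls(library, y)
    return weights, rnorm / np.linalg.norm(y)


mixture = library @ np.array([0.7, 0.0, 0.3])
halo = 0.3 * np.exp(-0.5 * ((two_theta - 25.0) / 4.0) ** 2)   # amorphous contribution
for name, y in [("crystalline mixture", mixture), ("with amorphous halo", mixture + halo)]:
    w, rel = identify(y, library)
    print(f"{name}: weights {np.round(w, 3)}, relative residual {rel:.3f}")
```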

[FIGURE: noisy multiphase XRD pattern; below, resolved phase fractions and a flagged unindexed amorphous halo highlighted via reconstruction residual]

23. Case: EELS Spectrum Imaging — Fe²⁺/Fe³⁺ Mapping

Problem. Map iron oxidation state at nm resolution. The Fe-L₂,₃ white-line ratio (\(L_3/L_2\)) and onset shift (~0.3 eV, slide 05) separate Fe²⁺ from Fe³⁺, but both are buried in Poisson noise at usable dose.

Pipeline. Power-law background (slide 07) → energy calibration to ZLP (slide 08) → DAE denoise pretrained on simulated Fe-L edges (method ML-PC u05; sim slide 21) → constrained white-line fit (slide 10) → continuous valence via the fitted ratio, not a raw latent (slide 17 caution).

Impact. Oxidation-state mapping at ~5× lower dose; resolves continuous mixed-valence gradients at interfaces; validated against Fe²⁺/Fe³⁺ reference standards (slide 16 discipline).
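One simple way to turn the white lines into a number, as a sketch: subtract a 2:1 double-step continuum whose height is read from the post-edge region, then integrate fixed windows around \(L_3\) and \(L_2\). The edge energies, widths, window size and step convention are assumptions; published conventions differ and the ratio depends on them, which is why calibration against Fe²⁺/Fe³⁺ standards measured the same way matters.

```python
import numpy as np


def lorentz(energy, e0, area, fwhm=1.0):
    hw = fwhm / 2.0
    return area * (hw / np.pi) / ((energy - e0) ** 2 + hw ** 2)


def step(energy, e0, width=0.5):
    """Smooth unit step (arctangent) centred at e0."""
    return 0.5 + np.arctan((energy - e0) / width) / np.pi


def white_line_ratio(energy, y, e_l3, e_l2, half_window=4.0, post_edge=(740.0, 760.0)):
    """L3/L2 from window integrals after removing a 2:1 double-step continuum
    whose total height is read from the post-edge region (assumed convention)."""
    post = (energy >= post_edge[0]) & (energy < post_edge[1])
    h = y[post].mean()
    continuum = h * (2.0 / 3.0 * step(energy, e_l3) + 1.0 / 3.0 * step(energy, e_l2))
    white_lines = y - continuum
    de = energy[1] - energy[0]
    i3 = white_lines[np.abs(energy - e_l3) <= half_window].sum() * de
    i2 = white_lines[np.abs(energy - e_l2) <= half_window].sum() * de
    return i3 / i2


# Hypothetical Fe-L2,3-like edge after pre-edge background removal:
# white lines at 708 and 721 eV with area ratio 3, on a 2:1 double step.
E = np.arange(690.0, 770.0, 0.05)
spectrum = (lorentz(E, 708.0, 3.0) + lorentz(E, 721.0, 1.0)
            + 0.3 * (2.0 / 3.0 * step(E, 708.0) + 1.0 / 3.0 * step(E, 721.0)))
ratio = white_line_ratio(E, spectrum, 708.0, 721.0)
print(f"L3/L2 = {ratio:.2f} (true area ratio 3.0)")
```

The result sits slightly below the true area ratio because the fixed windows truncate the Lorentzian tails and pick up a little of the neighbouring line; a consistent convention makes this cancel when comparing against standards.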

24. Case: Large-Scale EDS Maps — the Throughput Story

The scale challenge (the point of the slide). A \(512{\times}512{\times}2048\) cube holds \(512^2 \approx 2.6\times10^5\) spectra per field; multiple fields → \(10^6\)–\(10^7\) spectra per sample. The bottleneck is engineering, not method novelty.

Why PCA, specifically, here. Linear, deterministic, streamable (incremental SVD, one pass over the data); cost \(O(ND\min(N,D))\) for a full SVD of the \(N\times D\) matrix and roughly \(O(NDK)\) for a truncated/randomized top-\(K\) SVD. Denoise via top-\(K\) reconstruction → K-means in score space → map clusters back to \((x,y)\) → phase map in minutes on a workstation. (Method: ML-PC u02, referenced.)

Engineering reality. Memory-mapped datacubes, chunked/out-of-core SVD, GPU only where it pays. The win is throughput at fixed accuracy, not a better model.

Important

And it inherits slide 20’s caveat: trace elements below the noise floor and rare phases can be denoised away. Keep a residual/anomaly pass alongside the phase map.
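The throughput pattern in scikit-learn, as a minimal sketch: `IncrementalPCA.partial_fit` consumes the unfolded cube in chunks (in practice from an `np.memmap` too large for RAM), then K-means clusters the scores into a phase map. The toy cube, chunk size and component/cluster counts are hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import IncrementalPCA
from sklearn.metrics import adjusted_rand_score

rng = np.random.default_rng(0)
ny, nx, n_ch = 64, 64, 128
ch = np.arange(n_ch)


def line(mu):
    return 40.0 * np.exp(-0.5 * ((ch - mu) / 3.0) ** 2)


# Toy EDS cube: three phases in vertical bands, Poisson counts (hypothetical)
phase_map = np.zeros((ny, nx), dtype=int)
phase_map[:, 22:43] = 1
phase_map[:, 43:] = 2
spectra = np.vstack([line(30) + 2, line(60) + 2, line(30) + line(90) + 2])
cube = rng.poisson(spectra[phase_map]).astype(np.float32)      # (ny, nx, n_ch)

# In practice X would be an np.memmap over a file; partial_fit sees one chunk at a time.
X = cube.reshape(-1, n_ch)
chunk = 512
ipca = IncrementalPCA(n_components=3)
for start in range(0, X.shape[0], chunk):
    ipca.partial_fit(X[start:start + chunk])

scores = np.vstack([ipca.transform(X[s:s + chunk]) for s in range(0, X.shape[0], chunk)])
labels = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(scores)
label_map = labels.reshape(ny, nx)
print("agreement with the true phase map (ARI):",
      round(adjusted_rand_score(phase_map.ravel(), labels), 3))
```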

25. Discovery via Anomaly — the Workflow

- Train a representation on spectra from known phases only (clean nominal set — the prerequisite, ML-PC u05 §E).

Workflow:

- Apply to a new sample; most pixels reconstruct well.

- Map reconstruction error spatially → anomaly map (the slide-20 residual, used deliberately).

- Extract spectra from high-error regions; inspect physically.

- Identify the unknown phase; add to training; retrain.

- Example. AE trained on two base metals of a diffusion couple; a high-error band at the interface revealed an unexpected intermetallic invisible in the denoised map.

Important

The AE-anomaly method is ML-PC u05 §E (threshold from nominal validation error, never from anomalies). This slide is the materials discovery workflow wrapped around it.

26. Operando / Streaming Spectral Monitoring

- Operando: time-resolved XRD / Raman / EELS during synthesis, cycling, heating, catalysis — a spectrum stream, not a static cube.

New problems the stream creates

- Drift over time — the nominal distribution itself moves; a fixed threshold goes stale.

- Novelty detection online — a new phase appearing is the result.

- Adaptive acquisition — spend dose where the spectrum is changing.
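One way to keep a threshold from going stale, as a minimal sketch: track an exponentially weighted mean and variance of the reconstruction error and update them only on frames judged nominal, so slow drift is followed while a sudden new phase is not absorbed. The EWMA form, `alpha`, `n_sigma` and the simulated error stream are assumptions for illustration, not a prescribed detector.

```python
import numpy as np


class DriftingThreshold:
    """Online novelty threshold on a reconstruction-error stream.

    Flags a frame when err > mean + n_sigma * std of an exponentially weighted
    estimate. Only frames judged nominal update the statistics, so a genuinely
    new phase is not learned as 'normal'. alpha and n_sigma are hypothetical.
    """

    def __init__(self, initial_errors, alpha=0.05, n_sigma=5.0):
        self.mean = float(np.mean(initial_errors))
        self.var = float(np.var(initial_errors))
        self.alpha, self.n_sigma = alpha, n_sigma

    def update(self, err):
        novel = err > self.mean + self.n_sigma * np.sqrt(self.var)
        if not novel:
            d = err - self.mean
            self.mean += self.alpha * d
            self.var = (1.0 - self.alpha) * (self.var + self.alpha * d * d)
        return bool(novel)


rng = np.random.default_rng(0)
t = np.arange(400)
errors = 1.0 + 0.002 * t + rng.normal(0.0, 0.05, t.size)   # slow instrument drift
errors[300:310] += 1.0                                       # a new phase appears

detector = DriftingThreshold(errors[:50])
adaptive = np.zeros(t.size, dtype=bool)
for i in range(50, t.size):
    adaptive[i] = detector.update(errors[i])

fixed_limit = errors[:50].mean() + 5.0 * errors[:50].std()
fixed = errors > fixed_limit                                 # threshold frozen at start

print("adaptive flags:", np.flatnonzero(adaptive))
print("fixed-threshold false alarms before t=300:", int(fixed[:300].sum()))
```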

Beam-damage / dose-fractionation

- Track spectral change vs accumulated dose; stop or fractionate before the probe alters the sample (e.g. beam-induced Fe³⁺→Fe²⁺ reduction would corrupt the slide 23 valence map).

- Poisson model (slide 03) sets the detectable-change floor per frame.

Important

Methods referenced: AE-anomaly ML-PC u05 §E; Poisson noise ML-PC u02. New here: time — non-stationarity, online thresholds, dose as a budgeted resource.

27. Spectral Inverse Problems — EXAFS → Local Structure (stretch)

- Some spectra are an encoded structure, not a feature list. EXAFS oscillations \(\chi(k)\) are a sum over scattering paths → Fourier-related to a radial distribution (neighbour distances, coordination numbers).

- This is a modality-specific inverse problem: recover the structure that generates the spectrum via a known forward model (FEFF), not a generic decomposition.

- ML role: a differentiable / surrogate forward model (seeded on slide 10’s idea) for fast amortised inversion + uncertainty — the general inverse-problem theory is ML-PC u08; reference it, do not re-derive.
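The forward direction of the EXAFS problem, as a toy sketch: a single shell with constant backscattering amplitude, no phase shift and no mean-free-path term (all simplifying assumptions), Fourier-transformed with \(k^3\) weighting and a Hann window. Its \(|\mathrm{FT}|\) peaks at the true \(R\); with real phase shifts the peak typically appears a few tenths of an ångström short, which is one reason the inversion needs the forward model rather than reading peak positions.

```python
import numpy as np

# Single-shell, single-scattering toy (assumptions: constant backscattering
# amplitude, no phase shift, no mean-free-path term, S0^2 = 1).
k = np.arange(3.0, 14.0, 0.05)                      # photoelectron wavenumber, 1/angstrom
N_coord, R, sigma2 = 6, 2.0, 0.005                  # coordination, distance (A), DW factor (A^2)
chi = N_coord / (k * R**2) * np.exp(-2 * sigma2 * k**2) * np.sin(2 * k * R)


def fourier_magnitude(k, chi, r_grid, k_weight=3):
    """|FT| of k^w chi(k) with a Hann window; conjugate variable 2k -> R."""
    window = np.hanning(k.size)
    dk = k[1] - k[0]
    kernel = np.exp(2j * np.outer(r_grid, k))       # (n_r, n_k)
    return np.abs(kernel @ (window * k**k_weight * chi)) * dk


r_grid = np.arange(0.0, 6.0, 0.01)
magnitude = fourier_magnitude(k, chi, r_grid)
r_peak = r_grid[np.argmax(magnitude)]
print(f"|FT| peaks at R = {r_peak:.2f} A (true {R} A)")
```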

Important

An inverse problem can be ill-posed: many structures fit one spectrum. Same disease as MCR rotational ambiguity (slide 14) — physical constraints (known coordination chemistry, path filtering) are the cure.

Wrap

28. Key Takeaways — It Was Never About the Method

- The signal’s structure is dictated by the probe physics — that, not the algorithm, dictates the pipeline.

- Preprocessing dominates: a wrong background/calibration is a systematic error no model fixes (slides 06–11).

- Identifiability is the recurring disease — peak overlap (10), MCR rotational ambiguity (14), inverse ill-posedness (27); physical constraints are the recurring cure.

- A latent is not a quantity: physics + a reference standard turn it into a number with an honest error budget (15–17).

- Unsupervised compression erases the rare — denoising vs discovery; live in the residual (20, 25, 26).

- SSL/MAE turns the unlabelled archive into a feature extractor — if augmentations are physically valid (18, 19).

Important

The methods live in MFML u02/u05/u09 and ML-PC u02/u05. This unit was about what the signal physics demands. Next: Unit 10 — Transformers for materials.


29. References

Preprocessing & chemometrics

Quantification

Representation learning (referenced — derived in MFML u05/u09)

© Philipp Pelz - Machine Learning in Materials Processing & Characterization